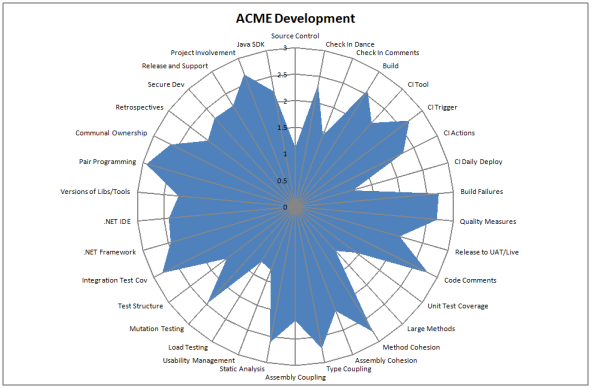

Quality Radar

Now I’m back on the blogwagon, I thought I’d let you know about one of the things I’ve been doing over the last couple of months.

As useful as standards and guidelines are, they are very much based in the here and now. What is missing is a statement of the goals for your development practices over the next year or two. Where do you and your organisation want to be over this time frame? To that end I created a multiple choice survey with each question having four answers. The questions used the following scoring system:

- 0 = The practice, tool or what-not mentioned in the question is not used / followed

- 1 = It is used / followed, but in an obsolete manner

- 2 = It is used / followed according to current standards

- 3 = It is used / followed according to our future goals

The questions fitted into five broad categories:

- Configuration Management

- Tools

- Practices

- Code Quality

- Testing

If you take the averages across all of your development teams you can chart it like so:

At a glance you can tell whether standards are being met, software quality aspirations are being achieved or that certain aspects of your development practices are below par. The more of the circle that is filled, the better you are at achieving your goals. When your scores mainly fall between two and three it is time to raise the bar.

This information is useful in a couple of ways. If you plot the scores in ascending order you can tell which things need to improve and spend your effort on them, based on their priority. You can also group the scores by category to see whether any category as a whole is a problem.

You can also plot individual teams against the average. This enables you to highlight to all devs what a particular team is good at. It also lets teams know where to get help from other teams who have a higher score for that particular practice.
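The number crunching behind these charts is straightforward. As a minimal Python sketch (not the tooling used for the survey above), assume each team's answers are stored as a score from 0 to 3 per question. The team names, questions, scores and function names here are all made up for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical survey results: team -> {(category, question): score 0..3}
responses = {
    "Team A": {
        ("Testing", "Unit tests"): 2,
        ("Tools", "CI server"): 3,
        ("Code Quality", "Code reviews"): 1,
    },
    "Team B": {
        ("Testing", "Unit tests"): 1,
        ("Tools", "CI server"): 2,
        ("Code Quality", "Code reviews"): 0,
    },
}


def question_averages(responses):
    """Average each question's score across all teams."""
    scores = defaultdict(list)
    for answers in responses.values():
        for key, score in answers.items():
            scores[key].append(score)
    return {key: mean(values) for key, values in scores.items()}


def priorities(averages):
    """Questions sorted in ascending order of score: weakest practices first."""
    return sorted(averages.items(), key=lambda item: item[1])


def category_averages(averages):
    """Roll the per-question averages up into one score per category."""
    by_category = defaultdict(list)
    for (category, _question), average in averages.items():
        by_category[category].append(average)
    return {category: mean(values) for category, values in by_category.items()}


def team_gaps(responses, team, averages):
    """Positive values show where a team is ahead of the average."""
    return {key: score - averages[key] for key, score in responses[team].items()}
```

Feeding `question_averages(responses)` into a radar chart gives the organisation-wide picture, while `team_gaps` shows which teams could help others with a particular practice.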

If you re-run every quarter or so, you can evaluate how the organisation has changed, identifying what worked and what didn’t. It’s basically a retrospective at a higher organisational level.

Quality in the Real World

We have been producing code quality dashboards at work. They are produced by our CI server whenever a build succeeds. This allows developers to get quick feedback on the quality of the code they have just committed. We can compare projects and raise their quality over time. This is all very well and good but wouldn’t it be nice to see how we are doing compared to the outside world?

So without further ado I present to you four code quality dashboards for a selection of .NET open source projects [and one MS one!]

As you can see, quality is variable, with NMock and ASP.NET MVC doing well. Fifty percent unit test coverage for NUnit is a bit shocking however!

The Ultimate Code Smell

Bob Martin has been thinking about adding a new element to the agile manifesto around producing quality rather than quantity. He’s described this as ‘Craftsmanship over Execution’. To back this up you can follow the instructions here and measure the number of WTFs per minute. A great idea for a metric, but hard to automate. Maybe an idea for a new startup: provide metrics for code in the same way third party companies perform penetration testing.

Quality Testing

As part of a continuous integration cycle most people consider running unit and integration tests. Some even consider running automated acceptance tests. Fewer still focus on code quality tests. Keeping code maintainable requires a certain amount of effort as the code changes. I think this is what the refactor stage of the TDD red, green, refactor cycle alludes to. As well as refactoring code to remove duplication, there are other considerations to be made with regards to maintainability. We use six indicators to give a finger-in-the-air estimate of the maintainability of a code base. The indicators we use are as follows:

Unit Test Coverage: High test coverage is a good indicator of whether a TDD approach is being followed and, if not, it at least gives an optimistic estimate of the chance of a bug being caught. Said another way, if a bug is introduced into the code the chance of it being caught is at best the percentage of code covered by tests. This very much depends on the quality of the tests, but if you only have fifty percent coverage and introduce a bug, it’s a coin toss whether it’s detected. If the tests are poor the real figure is much lower than fifty percent.

Percentage of large methods: Fairly obvious this one, but large methods are harder to maintain because they contain more code. There is more scope for error, less precision in identifying the cause of any error [any unit test covers more code] and a greater chance that the method is breaking the single responsibility principle, giving it more than one reason to change. What you consider a large method is up to you, but we have been using ten lines of code as our measure.

Class Cohesion: For a class to be cohesive all methods should use all fields. We use the Henderson-Sellers lack of cohesion of methods formula to measure this one. If a class isn’t cohesive it’s an indicator that unrelated functionality could be split into its own class. In other words it has more than one reason to change and is therefore breaking the single responsibility principle.
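The Henderson-Sellers formula is usually written as LCOM = (m - avg) / (m - 1), where m is the number of methods in the class and avg is the average number of methods that access each field. Zero means every method uses every field; values approaching one suggest the class could be split. Here is a minimal Python sketch of that calculation. The input shape (a map from field name to the methods that touch it) and the decision to return 0 for classes with fewer than two methods or no fields are assumptions made for the example, not part of our dashboard.

```python
def lcom_henderson_sellers(field_usage, method_count):
    """Lack of cohesion of methods, Henderson-Sellers variant.

    field_usage: dict mapping field name -> set of method names that access it.
    method_count: total number of methods in the class.
    """
    field_count = len(field_usage)
    # Assumption: the formula is undefined for these cases, so report 0.
    if method_count < 2 or field_count == 0:
        return 0.0
    average_methods_per_field = (
        sum(len(methods) for methods in field_usage.values()) / field_count
    )
    return (method_count - average_methods_per_field) / (method_count - 1)


# A cohesive class: both fields are used by all three methods.
cohesive = {"total": {"add", "remove", "sum"}, "items": {"add", "remove", "sum"}}

# Two unrelated halves living in one class: four methods, each field used by two.
split = {"name": {"get_name", "set_name"}, "price": {"get_price", "set_price"}}
```

Calling `lcom_henderson_sellers(cohesive, 3)` gives zero, while `lcom_henderson_sellers(split, 4)` gives two thirds, which flags the second class as a candidate for splitting.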

Package Cohesion: For a package or assembly to be cohesive the classes inside the package should be strongly related. This is a measure of the average number of type relationships within a package. Low cohesion suggests that the types can be split into separate packages.

Class Coupling: This is a measure of the number of types that depend on a particular type, a.k.a. afferent coupling. If a high number of types depend on the class in question, making changes to it will be hard without breaking lots of client code. There are a number of reasons why this might occur. Responsibility for one aspect may be split among multiple classes, but more likely you don’t have a loosely coupled design.

Package Coupling: This is a measure of the number of types outside this package or assembly that depend upon types within this package. One possible reason for high coupling is a packaging problem – things that change together should stay together. Another reason is that the packages in question have many responsibilities.
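Both coupling indicators boil down to counting incoming dependencies. The Python sketch below shows the idea on a hand-written dependency map; in practice the map would come from a static analysis tool. The type names, packages and function names are hypothetical, and every dependency is assumed to appear in the map itself.

```python
from collections import defaultdict

# Hypothetical dependency data: type -> (package, set of types it depends on)
types = {
    "Orders.Order": ("Orders", {"Core.Money", "Core.Clock"}),
    "Orders.Invoice": ("Orders", {"Core.Money", "Orders.Order"}),
    "Billing.Payment": ("Billing", {"Core.Money", "Orders.Invoice"}),
    "Core.Money": ("Core", set()),
    "Core.Clock": ("Core", set()),
}


def class_afferent_coupling(types):
    """Number of other types that depend on each type."""
    dependents = defaultdict(set)
    for name, (_package, dependencies) in types.items():
        for dependency in dependencies:
            if dependency != name:
                dependents[dependency].add(name)
    return {name: len(dependents[name]) for name in types}


def package_afferent_coupling(types):
    """Number of types outside each package that depend on types inside it."""
    dependents = defaultdict(set)
    for name, (package, dependencies) in types.items():
        for dependency in dependencies:
            target_package = types[dependency][0]
            if target_package != package:
                dependents[target_package].add(name)
    packages = {package for package, _dependencies in types.values()}
    return {package: len(dependents[package]) for package in packages}
```

In this made-up example `Core.Money` has three dependents, so it is the type where a breaking change would hurt most, and the `Core` package is depended upon by three outside types.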

I’d love to hear feedback on the way you measure the maintainability of code.

Mono Cecil, Visited and Observed

As I mentioned in a previous post, Mono Cecil is a library that lets you load and browse the types of a .NET assembly. For a simple [but potentially useful] look at what you can do, I’ll show you how you might go about listing all of the methods in an assembly. What will our test look like? Well, we will start with the assertion that the number of methods returned is what we expect:

    [Test]
    public void ShouldReturnNumberOfMethodsTest()
    {
        Assert.AreEqual(expectedMethodCount, actualMethodCount);
    }

Pretty simple so far, but we have a couple of design decisions to make to complete our test code. I’ll call our class that does the work AssemblyExaminer. It will need to expose a list of methods so I can get the count. It will also need to be passed an assembly as input. To avoid subtle state related bugs we will pass this in the constructor and make our class immutable. Looking at the Mono.Cecil namespace the AssemblyFactory.GetAssembly method has three overloads. One takes a filename, one a byte array and the other a stream. For our purposes a stream provides us with the best level of abstraction. With all that in mind here is our [almost] completed unit test:

    [Test]
    public void ShouldReturnNumberOfMethodsTest()
    {
        const int expectedMethodCount = ?;
        using (Stream testAssembly = GetTestAssembly())
        {
            AssemblyExaminer examiner = new AssemblyExaminer(testAssembly);
            int actualMethodCount = examiner.Methods.Count;
            Assert.AreEqual(expectedMethodCount, actualMethodCount);
        }
    }

Two things remain. One is simply replacing the ? with the number of methods I expect to find. The other is spinning up the stream containing the assembly. I have called the method that creates the stream GetTestAssembly. We’ll see how to implement that in a minute.

Refactoring Made Easier

JetBrains TeamCity is a build management and continuous integration platform which supports .NET and Java. Having set up TeamCity and played with it for a couple of weeks, I’m very impressed by the slick UI and features provided out of the box. These include a set of code quality features. Even better is the fact that the professional version is free.

One of the things that really impressed me is the duplicates finder. As the name suggests it detects duplicate code and currently works with Java, C# [up to 2.0] and VB [up to 8.0]. This helps you target the areas that need refactoring.

Alongside the duplicates [Java example above] a ‘cost’ is calculated. I’m not sure of the algorithm used, but it seems fairly sensible and the cost has some relation to the amount of code that is repeated. You can use this to help prioritise your refactoring. To set up your build simply set the runner to be the duplicates finder.

The Complexity Implementation Tangle

Using relative complexity in your estimation is quite an effective technique. To do this you would normally associate a complexity score with each feature or story. The scale a lot of people use is based on the Fibonacci sequence, where each number is the sum of the previous two: F(n) = F(n-1) + F(n-2). You start with 0 and 1 and, dropping the leading 0 and the repeated 1, obtain the following sequence: 1, 2, 3, 5, 8, 13 etc. You assign points based on the relative complexity, where a 2 point story would be roughly twice as complicated as a 1 point story. The power of this approach comes from the comparison – we seem to be naturally better at this than assigning absolute values.
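If you want to generate the scale rather than remember it, a few lines will do. This is a small Python sketch; the function name `fibonacci_scale` is just an illustrative choice.

```python
def fibonacci_scale(count):
    """Return the first `count` story point values: 1, 2, 3, 5, 8, 13, ..."""
    scale = []
    previous, current = 0, 1  # the sequence is seeded with 0 and 1
    while len(scale) < count:
        previous, current = current, previous + current
        # Skip the repeated 1 so every value on the scale is distinct.
        if not scale or current != scale[-1]:
            scale.append(current)
    return scale
```

`fibonacci_scale(6)` returns `[1, 2, 3, 5, 8, 13]`, the values quoted above.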

If you take this approach it is very useful to measure how long each story took to implement. With this information in hand you can draw a picture similar to the following:

On the top side of the diagram you have the complexity points, scaled appropriately. On the bottom side of the diagram you have implementation time, again scaled appropriately. If you plot all of the features on the bottom in the correct position with regards to the time taken to implement them, and draw a straight line back to the complexity point estimate [I know my picture above doesn’t use straight lines, I’ll update the post with a better example], you’ll end up with something along the lines of the diagram above.

In an ideal world all of the features that were estimated as 1 complexity point will have taken you less time to develop than those you estimated as 2 complexity points and so on. The places where the lines tangle [cross each other] suggest where your estimation could be improved. Your retrospective can be used to address such issues.
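You don’t strictly need the picture to find the tangles. Two lines cross exactly when one story was given fewer points than another but took longer to implement. The Python sketch below lists those pairs; the story names and numbers are invented, and stories with equal points or equal times are treated as not crossing, which is an assumption of this example.

```python
from itertools import combinations


def tangles(stories):
    """Return the pairs of stories whose estimate and actual order disagree.

    stories: list of (name, complexity_points, hours_to_implement) tuples.
    """
    crossed = []
    for (name_a, points_a, hours_a), (name_b, points_b, hours_b) in combinations(stories, 2):
        # Opposite signs mean the lower estimate took longer: the lines cross.
        if (points_a - points_b) * (hours_a - hours_b) < 0:
            crossed.append((name_a, name_b))
    return crossed


# Hypothetical sprint data
stories = [
    ("login", 1, 3),
    ("search", 2, 5),
    ("export", 3, 4),
]
```

Here `tangles(stories)` reports the `search` and `export` pair: export was estimated as more complex but took less time, so that is the pair to talk about in the retrospective.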

The Chicken Dance

Well, that’s what it sounds like when my antipodean friends talk about the process of checking in code. Seriously though, development is a complex beast, as you realise when you start talking in detail about any of the constituent tasks that a developer is responsible for. Take developers’ testing responsibilities as an example [which I conveniently ran a workshop on last week]. These break down into the two broad areas of verification and validation.

A workshop to figure these things out is a very useful tool and one that I would recommend at your workplace. It is truly incredible the depth of coverage you can get in a topic with ten people sitting around the table. We spent 5-10 minutes where everybody wrote down each responsibility or task they could think of on a separate post-it. We went through a whole pack of post-it notes [admittedly with a bit of duplication]. We then took it in turns to stick the post-its on the board, roughly grouping and recognising duplicates as we did so. I’m currently busy writing these up for our internal wiki. Making developers aware of the broad range of testing tasks and supporting this with automation of acceptance tests, unit tests etc. will really help improve the quality of the code delivered to the QA teams and eventually the customer. Ideally everybody will pick up on this and you will end up with a zero defect culture.

Standards and Guidelines are Useless

That is quite a bold statement I just made, but bear with me. I’ve come to the opinion that all standards, guidelines and best practices are useless if they only exist in a document somewhere. Invariably this document gets lost down the back of the enterprise sofa weeks after its initial distribution. Developers stop referring to it, it goes out of date and new developers are unaware of its existence.

The good news is that this problem can be solved quite simply. Automation is the answer and it places even more importance on having a sensible CI [Continuous Integration] strategy. All of the things that are truly important to the quality of your code can be automated. Standards and guidelines can be checked. By integrating these checks into your build platform developers receive regular feedback that they are more likely to act upon. Nightly builds can be set up to provide a full suite of information about adherence to standards and guidelines along with build health. These can include, for example, the standard stuff such as unit test coverage alongside naming convention, web accessibility & code readability checks. When a standard or guideline is changed, developers get feedback about this by the next day at worst.

By eliminating waste in the feedback loop and ensuring that code is tested against the standards in place, you will quickly start to see the real effect of these standards and take an agile approach to evolving them to improve your codebase.

The 56 Complexity Point Dash

Tracking your progress is traditionally done through the use of burn up / burn down charts. I’d like to suggest an alternative – The Sprint.

As you can see it resembles a real race where the length of the course is the sum of the complexity points you intend to deliver for that sprint.

The running line-up is:

Lane 1 [Green] : The Pacemaker. Each day she runs the same amount and crosses the finish line at the end of the sprint.

Lane 2 [Red] : You / your team. Every time you complete a feature you can move your runner forward by that amount.

Lane 3…N [Blue and Yellow] : You / your team for the last N sprints. Progress as measured in a previous sprint.

If this information is captured every day, it’d be fairly trivial to track your progress in a visual manner. Simply printing each day and flipping the pages would give you an animated view of your progress throughout the sprint. You can see how far off the pace you are and how you are doing compared to previous sprints. You could even create an animation and send it to the team each day.
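The positions themselves are easy to work out each day. Below is a rough Python sketch, assuming you record the complexity points completed on each day of the sprint. The function names, the ten day sprint and the text rendering are all illustrative choices rather than how the chart above was drawn.

```python
def pacemaker_position(total_points, sprint_days, day):
    """The pacemaker covers the same distance every day and finishes on the last day."""
    return total_points * min(day, sprint_days) / sprint_days


def team_position(completed_by_day, day):
    """The team moves forward by the points of each feature completed so far."""
    return sum(completed_by_day[:day])


def render_lane(label, position, total_points, width=56):
    """Draw one lane as text, with the runner shown as '>'."""
    filled = round(width * min(position, total_points) / total_points)
    return f"{label:<10}|{'=' * filled}>{' ' * (width - filled)}|"


# Hypothetical sprint: 56 points over 10 days, points completed per day so far
total_points = 56
sprint_days = 10
completed_by_day = [0, 5, 8, 0, 13]

day = 5
lanes = [
    render_lane("Pacemaker", pacemaker_position(total_points, sprint_days, day), total_points),
    render_lane("Team", team_position(completed_by_day, day), total_points),
]
```

Printing `lanes` once a day gives the flip-book effect described above. Extra lanes for previous sprints only need their own `completed_by_day` lists.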